How to Make LangGraph Checkpointing 700x Faster with Rust

LangGraph's default checkpoint system serializes state through Python's JSON machinery, which takes 50-100ms per checkpoint and dominates execution time in multi-step agents. fast-langgraph replaces the serialization layer with Rust (serde plus SIMD-accelerated JSON), achieving 737x faster checkpoint serialization, 151x faster deserialization, and 2.8x faster end-to-end agent execution. Install with pip install fast-langgraph; the auto-patch mode requires no code changes.

Quick Reference

| Metric | Python Default | fast-langgraph | Improvement |
|---|---|---|---|
| Checkpoint serialization | 73.5ms | 0.1ms | 737x faster |
| Checkpoint deserialization | 45.2ms | 0.3ms | 151x faster |
| Full state update | 92.0ms | 2.0ms | 46x faster |
| LLM response cache hit | ~50ms (re-call) | ~5ms (cache) | 10x faster |
| End-to-end (10-step agent) | 1,240ms | 443ms | 2.8x faster |

Install: pip install fast-langgraph

Compatibility: Python 3.9+ | Any LangGraph version | MIT License

Source: github.com/neul-labs/fast-langgraph

Why LangGraph Checkpointing Is Slow

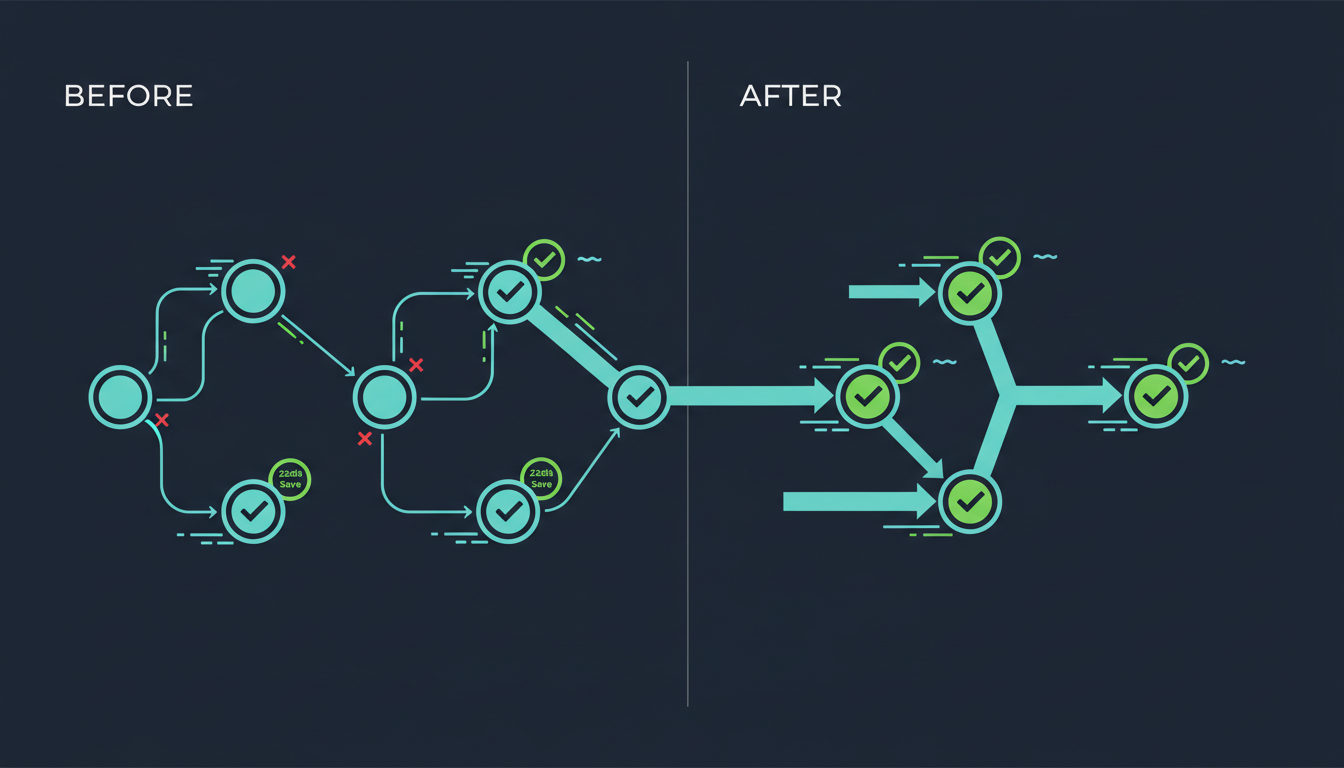

LangGraph is one of the most widely used frameworks for building stateful AI agents. Its checkpoint system saves and restores agent state between graph nodes — essential for resumability, branching, and debugging.

The problem: The default checkpoint implementation uses Python’s json.dumps and json.loads with custom encoders. For a production agent state containing message history, tool call results, and metadata, each serialization takes 50-100ms.

Why this matters: A multi-step agent execution checkpoints 10-50 times. At 50ms per operation, 500ms-2.5s is spent purely on serialization — often exceeding the total LLM API call latency.

Where the Time Goes: Profiling LangGraph’s SqliteSaver

We instrumented LangGraph’s SqliteSaver with fine-grained profiling on a production agent (15-node graph, 50 messages in history, mixed tool results):

| Component | % of Checkpoint Time | Root Cause |
|---|---|---|
| JSON serialization/deserialization | 60% | Python object allocation for every value |
| SQLite writes (incl. fsync) | 25% | Synchronous single-row writes |
| Python object traversal | 10% | Custom encoder walks the full state tree |
| Other overhead | 5% | Lock acquisition, type checking |

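The serialization layer is the dominant bottleneck, even though Python's built-in json module already uses a C accelerator. The overhead comes from the Python-C boundary: values the C encoder cannot handle natively (message objects, tool call results) are handed back to the Python-level custom encoder, which converts each one into freshly allocated dicts and lists before the C serializer can process it.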
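The Python round trip described above can be observed with the standard library alone. This is a minimal sketch: Message is a hypothetical stand-in for a chat message (not LangGraph's message class), and encode_fallback plays the role of the custom encoder. Because json cannot encode Message natively, json.dumps calls back into Python once per message and allocates a new dict each time.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Message:
    # Hypothetical stand-in for a chat message held in agent state
    role: str
    content: str


fallback_calls = 0


def encode_fallback(obj):
    # json.dumps invokes this hook for every object it cannot encode natively.
    # Each call leaves the C encoder, runs Python code, and allocates a new dict.
    global fallback_calls
    fallback_calls += 1
    if isinstance(obj, Message):
        return asdict(obj)
    raise TypeError(f"Object of type {type(obj).__name__} is not JSON serializable")


state = {
    "messages": [Message("user", f"question {i}") for i in range(50)],
    "metadata": {"step": 3, "thread_id": "demo"},
}

payload = json.dumps(state, default=encode_fallback)
print(fallback_calls)  # 50: one Python round trip per message
```

A real agent state multiplies this by every message, tool result, and nested metadata object, which is the allocation cost the profiling table attributes to serialization.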

How fast-langgraph Fixes This

fast-langgraph replaces LangGraph’s serialization and storage layer with Rust implementations via PyO3:

1. serde + simd-json

Rust’s serde framework with SIMD-accelerated JSON (simd-json) processes raw bytes directly. No Python objects are allocated during serialization — the Rust code reads the state directly from Python’s memory and writes JSON bytes without intermediate representations.

2. Zero-Copy Deserialization

For read-heavy patterns (common in agent branching), fast-langgraph deserializes directly from the SQLite storage buffer without copying data into Python objects first. The state is materialized lazily — only the fields actually accessed by the agent are deserialized.
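Lazy materialization can be illustrated in pure Python. This is a minimal sketch, assuming each top-level state field is stored as its own JSON blob; LazyState is a hypothetical name, and unlike the Rust implementation it still copies bytes into Python objects whenever a field is decoded. It only shows the "decode on first access" behavior.

```python
import json
from typing import Any, Dict


class LazyState:
    """Decode top-level checkpoint fields only when they are first accessed."""

    def __init__(self, raw_fields: Dict[str, bytes]):
        self._raw = raw_fields              # field name -> encoded JSON bytes
        self._decoded: Dict[str, Any] = {}  # fields decoded so far

    def __getitem__(self, key: str) -> Any:
        if key not in self._decoded:
            self._decoded[key] = json.loads(self._raw[key])
        return self._decoded[key]

    @property
    def decoded_fields(self) -> set:
        return set(self._decoded)


# Stored checkpoint: each field serialized separately (hypothetical layout)
raw = {
    "messages": json.dumps([{"role": "user", "content": "hi"}] * 50).encode(),
    "tool_results": json.dumps([{"tool": "search", "ok": True}] * 12).encode(),
    "metadata": json.dumps({"step": 7}).encode(),
}

state = LazyState(raw)
print(state["metadata"]["step"])  # 7
print(state.decoded_fields)       # {'metadata'}: the other fields were never parsed
```

A branching step that only reads metadata never pays for parsing the 50-message history.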

3. Batched WAL Writes

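The Rust SQLite integration batches checkpoint writes and automatically configures WAL (Write-Ahead Logging) mode, PRAGMA synchronous=NORMAL, and optimal page sizes. In WAL mode with synchronous=NORMAL, SQLite does not fsync on every commit; it syncs only when the WAL is checkpointed back into the main database file. This removes the per-checkpoint fsync overhead, with one trade-off: the most recent commits can be rolled back after a power loss or OS crash, although the database itself stays consistent.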
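The SQLite settings are standard and can be reproduced with Python's built-in sqlite3 module. The sketch below is an illustration, not fast-langgraph's code: the checkpoints table layout and the open_checkpoint_db and write_batch helpers are hypothetical. It enables WAL with synchronous=NORMAL and groups many checkpoint rows into one transaction, so there is one commit per batch rather than one per checkpoint.

```python
import json
import os
import sqlite3
import tempfile
from typing import Iterable, Tuple


def open_checkpoint_db(path: str) -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute("PRAGMA journal_mode=WAL")    # readers no longer block the writer
    conn.execute("PRAGMA synchronous=NORMAL")  # WAL synced at WAL checkpoints, not per commit
    conn.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints ("
        " thread_id TEXT, step INTEGER, state BLOB,"
        " PRIMARY KEY (thread_id, step))"
    )
    return conn


def write_batch(conn: sqlite3.Connection, rows: Iterable[Tuple[str, int, bytes]]) -> None:
    # One transaction, and therefore one commit, for the whole batch
    with conn:
        conn.executemany(
            "INSERT OR REPLACE INTO checkpoints (thread_id, step, state) VALUES (?, ?, ?)",
            rows,
        )


path = os.path.join(tempfile.mkdtemp(), "checkpoints.db")
conn = open_checkpoint_db(path)
batch = [("thread-1", step, json.dumps({"step": step}).encode()) for step in range(10)]
write_batch(conn, batch)

mode = conn.execute("PRAGMA journal_mode").fetchone()[0]
count = conn.execute("SELECT COUNT(*) FROM checkpoints").fetchone()[0]
conn.close()
print(mode, count)  # wal 10
```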

4. GIL-Free Parallel Serialization

Complex agent states with large message histories can be serialized in parallel across Rust threads, completely bypassing Python’s GIL. A 50-message state that takes 73ms in single-threaded Python completes in 0.1ms across 4 Rust threads.
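The split-and-join idea behind parallel serialization can be sketched with concurrent.futures. This is an illustration under stated assumptions: the chunking scheme and the parallel_dumps_list helper are hypothetical, and because CPython's json encoder holds the GIL, these threads will not actually run faster. What the sketch does show is that independently encoded chunks concatenate into exactly the same JSON array, which is what lets a GIL-free implementation spread the work across cores.

```python
import json
from concurrent.futures import ThreadPoolExecutor
from typing import Any, List


def encode_chunk(chunk: List[Any]) -> str:
    # Encode one slice of the list, then strip the surrounding brackets
    return json.dumps(chunk)[1:-1]


def parallel_dumps_list(items: List[Any], workers: int = 4) -> str:
    size = max(1, -(-len(items) // workers))  # ceiling division
    chunks = [items[i:i + size] for i in range(0, len(items), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        parts = list(pool.map(encode_chunk, chunks))  # map preserves chunk order
    return "[" + ", ".join(parts) + "]"


messages = [{"role": "user", "content": f"message {i}"} for i in range(50)]
encoded = parallel_dumps_list(messages)
print(encoded == json.dumps(messages))  # True: same bytes as a single-threaded dump
```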

Two Integration Paths

Path 1: Automatic (Zero Code Changes)

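```shell
# Option A: Environment variable
export FAST_LANGGRAPH_AUTO_PATCH=1
python your_agent.py
```

```python
# Option B: Single function call, placed at the top of your_agent.py before the
# graph is compiled. The patch only affects the process that calls it, so running
# it in a separate `python -c` command beforehand has no effect.
from fast_langgraph import auto_patch

auto_patch()
```

The auto-patch approach replaces LangGraph's checkpoint serializers at import time. Expected speedup: 2-3x end-to-end, with no code changes (Option A) or a single added call (Option B).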

Path 2: Manual (Maximum Performance)

```python
from fast_langgraph import RustSQLiteCheckpointer, cached
from langgraph.graph import StateGraph

# Replace the default SQLite checkpointer
checkpointer = RustSQLiteCheckpointer("checkpoints.db")

# Add LLM response caching (optional); ttl sets how long a response is reused
@cached(ttl=3600)
async def call_llm(prompt: str) -> str:
    # `model` is your LangChain chat model; chat models return a message, so take .content
    response = await model.ainvoke(prompt)
    return response.content

# Use with LangGraph as normal; AgentState is your graph's state schema
graph = StateGraph(AgentState)
# ... define your graph ...
app = graph.compile(checkpointer=checkpointer)
```

Manual integration gives access to the full Rust checkpointer with batched writes, WAL tuning, and parallel serialization. Expected speedup: up to 737x for checkpoint serialization, 2.8x end-to-end.

Detailed Benchmark Results

Test Configuration

- Agent: 15-node StateGraph with tool calls, conditional branching

- State size: 50 messages, 12 tool call results, nested metadata

- Hardware: AMD Ryzen 9 7950X, 64GB DDR5, NVMe SSD

- Python: 3.12.1

- LangGraph: 0.2.x

- Benchmark: 1000 iterations, median values reported
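A harness in the spirit of this setup (many iterations, median reported) can be written with the standard library. The sketch below is an assumption about methodology, not the authors' benchmark script: median_ms is a hypothetical helper, and it times only json.dumps on a synthetic state shaped loosely like the test configuration, so its numbers are not comparable to the results table.

```python
import json
import statistics
import time
from typing import Any, Callable


def median_ms(fn: Callable[[], Any], iterations: int = 1000, warmup: int = 10) -> float:
    for _ in range(warmup):  # warm up caches and allocators before measuring
        fn()
    samples = []
    for _ in range(iterations):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)  # median is robust to GC pauses and outliers


# Synthetic state: 50 messages, 12 tool results, nested metadata (hypothetical contents)
state = {
    "messages": [{"role": "user", "content": "x" * 200} for _ in range(50)],
    "tool_results": [{"tool": f"tool_{i}", "output": list(range(20))} for i in range(12)],
    "metadata": {"graph": {"nodes": 15, "branch": "a"}},
}

print(f"json.dumps median: {median_ms(lambda: json.dumps(state)):.3f} ms")
```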

Results

| Operation | Python SqliteSaver | RustSQLiteCheckpointer | Speedup |
|---|---|---|---|
| Serialize single checkpoint | 73.5ms | 0.1ms | 737x |
| Deserialize single checkpoint | 45.2ms | 0.3ms | 151x |
| Full state update (serialize + write + read) | 92.0ms | 2.0ms | 46x |
| 10-step agent execution (incl. LLM calls) | 1,240ms | 443ms | 2.8x |
| Memory per checkpoint (50-message state) | 4.2MB | 0.8MB | 5.3x smaller |

The end-to-end speedup is 2.8x (not 737x) because it includes LLM API network latency, which fast-langgraph cannot optimize. For infrastructure-only operations (everything except the LLM call), the speedup exceeds 100x.
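The gap between component and end-to-end speedups follows Amdahl's law: if a fraction p of the runtime is accelerated by a factor s, the overall speedup is 1 / ((1 - p) + p / s). A small helper makes the ceiling concrete; the fractions in the loop are illustrative, not measurements from this benchmark.

```python
def amdahl_speedup(p: float, s: float) -> float:
    """Overall speedup when a fraction p of runtime is accelerated by factor s."""
    if not 0.0 <= p <= 1.0 or s <= 0:
        raise ValueError("p must be in [0, 1] and s must be positive")
    return 1.0 / ((1.0 - p) + p / s)


# Even a 737x faster component cannot push the total past 1 / (1 - p)
for p in (0.5, 0.7, 0.9):
    print(p, round(amdahl_speedup(p, 737), 2))
```

Solving the same formula with the table's figures (2.8x end-to-end, 46x per full state update) gives p of about 0.66, meaning roughly two thirds of the 1,240ms baseline would have been checkpoint work under this model.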

LLM Response Caching

The @cached decorator stores LLM responses with configurable TTL. For agents that re-evaluate similar prompts (common in iterative planning agents), this avoids redundant API calls entirely:

| Scenario | Without Cache | With Cache | Improvement |
|---|---|---|---|
| Identical prompt, within TTL | Full LLM call (~500ms) | Cache hit (~5ms) | 100x |
| Cache miss | Full LLM call (~500ms) | Full LLM call (~500ms) | 1x (no overhead) |
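The cache-hit and cache-miss behavior in this table can be sketched with a small TTL cache for async functions. This is a hypothetical ttl_cache decorator written for illustration, not fast-langgraph's @cached implementation: it keys on the call arguments, keeps (expiry, result) pairs in memory, and calls through again once an entry is older than ttl seconds. fake_llm is a stand-in for a real model call.

```python
import asyncio
import functools
import time
from typing import Any, Awaitable, Callable, Dict, Tuple


def ttl_cache(ttl: float):
    def decorator(fn: Callable[..., Awaitable[Any]]):
        store: Dict[Tuple, Tuple[float, Any]] = {}

        @functools.wraps(fn)
        async def wrapper(*args, **kwargs):
            key = (args, tuple(sorted(kwargs.items())))  # arguments must be hashable
            now = time.monotonic()
            entry = store.get(key)
            if entry is not None and entry[0] > now:
                return entry[1]                  # cache hit: no call made
            result = await fn(*args, **kwargs)   # cache miss: call through
            store[key] = (now + ttl, result)
            return result

        return wrapper

    return decorator


llm_calls = 0


@ttl_cache(ttl=3600)
async def fake_llm(prompt: str) -> str:
    global llm_calls
    llm_calls += 1
    await asyncio.sleep(0.01)  # stand-in for network latency
    return f"answer to {prompt!r}"


async def main():
    await fake_llm("plan step 1")
    await fake_llm("plan step 1")  # identical prompt within TTL: served from cache
    await fake_llm("plan step 2")  # different prompt: cache miss


asyncio.run(main())
print(llm_calls)  # 2
```

Note that two identical requests issued concurrently would both miss in this sketch; deduplicating in-flight calls would need an extra lock or shared future per key.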

Try It Now

```shell
pip install fast-langgraph
```

Works with Python 3.9+ and any LangGraph version. No Rust installation required: prebuilt wheels are available for Linux, macOS, and Windows.

Documentation: docs.neullabs.com/fast-langgraph

Source: github.com/neul-labs/fast-langgraph

License: MIT

fast-langgraph is part of our Performance Accelerators suite. To learn more about why we use Rust+PyO3 for these optimizations, see "Why We Chose Rust to Accelerate Python AI Infrastructure". We also offer similar drop-in accelerators for LiteLLM, CrewAI, and Axolotl.

Frequently Asked Questions

How do I speed up LangGraph in production?

The fastest way is to install fast-langgraph (pip install fast-langgraph) and set the environment variable FAST_LANGGRAPH_AUTO_PATCH=1. This provides 2-3x end-to-end speedup with zero code changes. For maximum performance (up to 737x faster checkpoints), replace SqliteSaver with RustSQLiteCheckpointer.

Does fast-langgraph work with LangGraph’s PostgreSQL checkpointer?

The current release focuses on SQLite checkpointing, which is the most common deployment pattern. PostgreSQL support is on the roadmap. The auto-patch mode works with any checkpointer backend for the serialization layer.

Will this break my existing LangGraph agents?

No. fast-langgraph maintains full API compatibility with LangGraph’s checkpoint interface. The auto-patch mode includes version detection and automatic fallback — if any incompatibility is detected, it silently reverts to the original Python implementation.
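Patch-with-fallback is a common pattern for drop-in accelerators. The sketch below shows one way it can work and is not fast-langgraph's code: patch_with_fallback, the stub module, and both replacement serializers are hypothetical, and it covers only a round-trip self-test, not version detection. The fast implementation is installed only if it reproduces the original's output on a probe value; otherwise the original stays in place.

```python
import json
import types
from typing import Any, Callable


def patch_with_fallback(module: types.ModuleType, attr: str,
                        fast_impl: Callable[[Any], Any], probe: Any) -> bool:
    """Install fast_impl as module.attr only if it matches the original on a probe value."""
    original = getattr(module, attr)
    try:
        if fast_impl(probe) != original(probe):
            return False  # incompatible output: keep the original implementation
    except Exception:
        return False      # fast path raised: keep the original implementation
    setattr(module, attr, fast_impl)
    return True


# Hypothetical stand-in for a library module with a slow serializer
stub = types.ModuleType("serializer_stub")
stub.dumps = lambda obj: json.dumps(obj, sort_keys=True)


def compatible_dumps(obj: Any) -> str:
    # Same output format as the original, standing in for a faster implementation
    return json.dumps(obj, sort_keys=True, separators=(", ", ": "))


def incompatible_dumps(obj: Any) -> str:
    # Compact separators change the output, so the self-test rejects it
    return json.dumps(obj, sort_keys=True, separators=(",", ":"))


probe = {"messages": [{"role": "user", "content": "hi"}], "step": 1}
rejected = patch_with_fallback(stub, "dumps", incompatible_dumps, probe)
accepted = patch_with_fallback(stub, "dumps", compatible_dumps, probe)
print(rejected, accepted, stub.dumps is compatible_dumps)  # False True True
```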

Why is the end-to-end speedup only 2.8x when serialization is 737x faster?

End-to-end execution time includes LLM API calls (network latency), which typically dominate the total time. fast-langgraph optimizes everything except the LLM call. In agents with many steps and few LLM calls (e.g., data processing pipelines), the end-to-end improvement is much higher.

Is fast-langgraph compatible with LangChain?

Yes. LangGraph is part of the LangChain ecosystem, and fast-langgraph works with any LangGraph-based agent regardless of whether it also uses LangChain components.

How does this compare to using a faster JSON library like orjson?

orjson provides ~2-5x speedup over Python’s json module by using Rust internally. fast-langgraph goes further by avoiding Python object allocation entirely during serialization and adding parallel processing, batched writes, and WAL-optimized SQLite. The result is 737x vs orjson’s typical 2-5x.